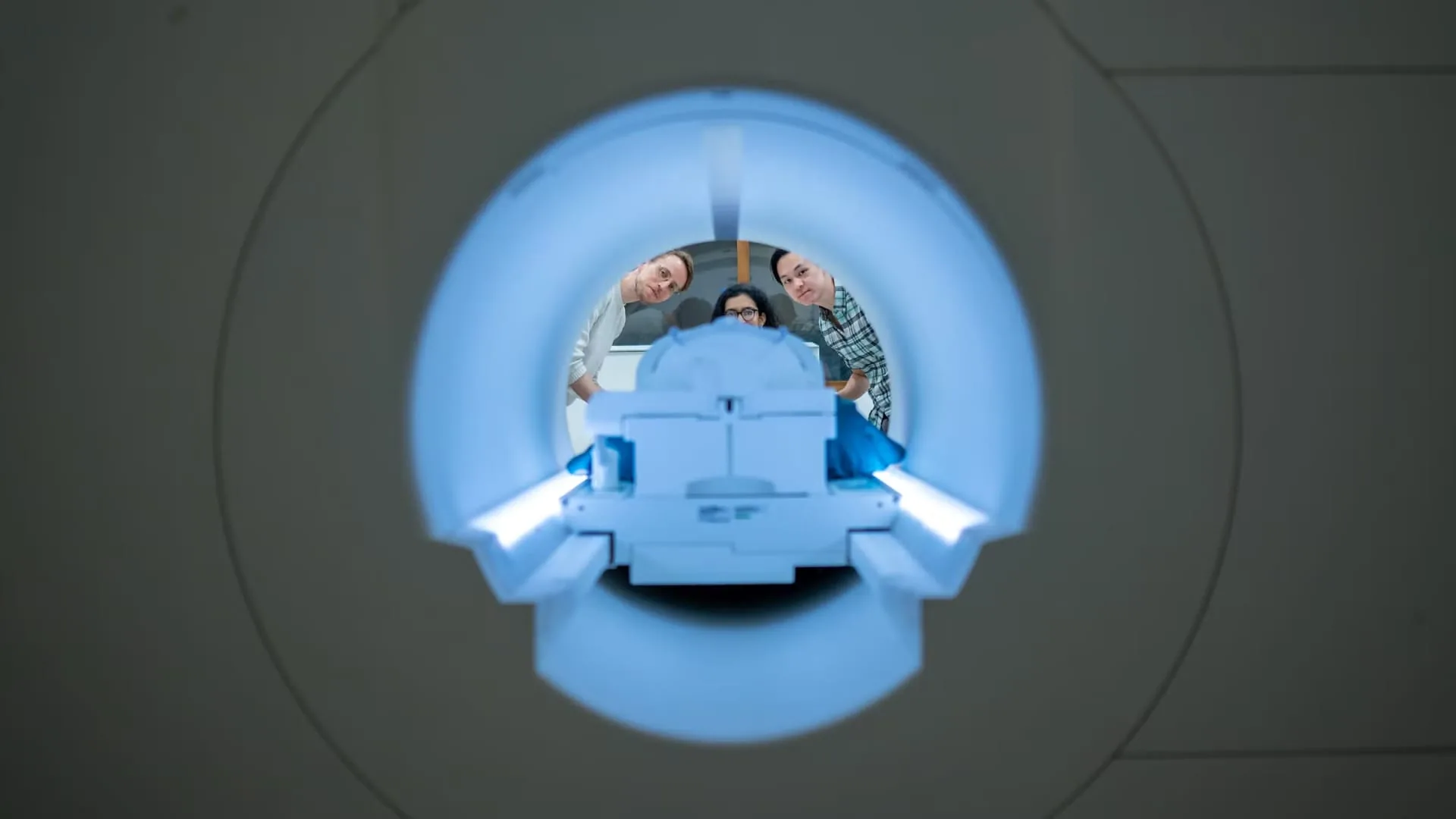

Alex Huth (left), Shailee Jain (center) and Jerry Tang (right) prepare to collect brain activity data at the University of Texas Biomedical Imaging Center at Austin. The researchers trained their semantic decoder using dozens of hours of participants’ brain activity data collected in an fMRI scanner.

Photo: Nolan Zuk/University of Texas at Austin.

Scientists have developed a non-invasive AI system that can translate a person’s brain activity into a stream of text, according to a peer-reviewed study published Monday in the journal Nature Neuroscience.

Dubbed a semantic decoder, the system could ultimately benefit patients who have lost the ability to communicate physically after a stroke, paralysis or a degenerative disease.

Researchers at the University of Texas at Austin developed the system in part using a transformer model similar to those that power Google’s Bard and OpenAI’s ChatGPT chatbots.

The decoder was trained on brain activity recorded while study participants listened to several hours of podcasts in an fMRI scanner, a large device that measures brain activity. The system does not require any surgical implants.

PhD student Jerry Tang prepares to collect brain activity data at the University of Texas Biomedical Imaging Center in Austin.

Photo: Nolan Zuk/University of Texas at Austin.

Once trained, the AI system can generate a stream of text as the participant listens to, or imagines telling, a new story. The resulting text is not an exact transcript; the researchers designed the system to capture general thoughts or ideas.

According to a press release, about half the time, the trained system produces text that closely or exactly matches the intended meaning of the participant’s original words.

For example, when a participant heard the words “I don’t have a driver’s license yet” during an experiment, the decoder rendered the participant’s thoughts as “She hasn’t even started learning to drive yet.”

“For a non-invasive method, this is a real advance compared to what has been done before, which typically consists of single words or short sentences,” said Alexander Huth, one of the study’s leaders, in the press release. “We get the model to decode continuous speech over longer periods of time with complicated ideas.”

Participants were also asked to watch four videos with no sound while inside the scanner, and the AI system was able to accurately describe “specific events” from them, the press release said.

As of Monday, the decoder cannot be used outside a laboratory setting because it relies on the fMRI scanner. However, according to the press release, the researchers believe it could eventually work with more portable brain imaging systems.

The study’s lead investigators have filed a PCT patent application for this technology.